I posted a while back, in "wOBA by Count," about how wOBA changes over the course of an at-bat. An interesting follow-up is whether pitchers who throw first-pitch strikes more often end up with better results the following season.

The Data

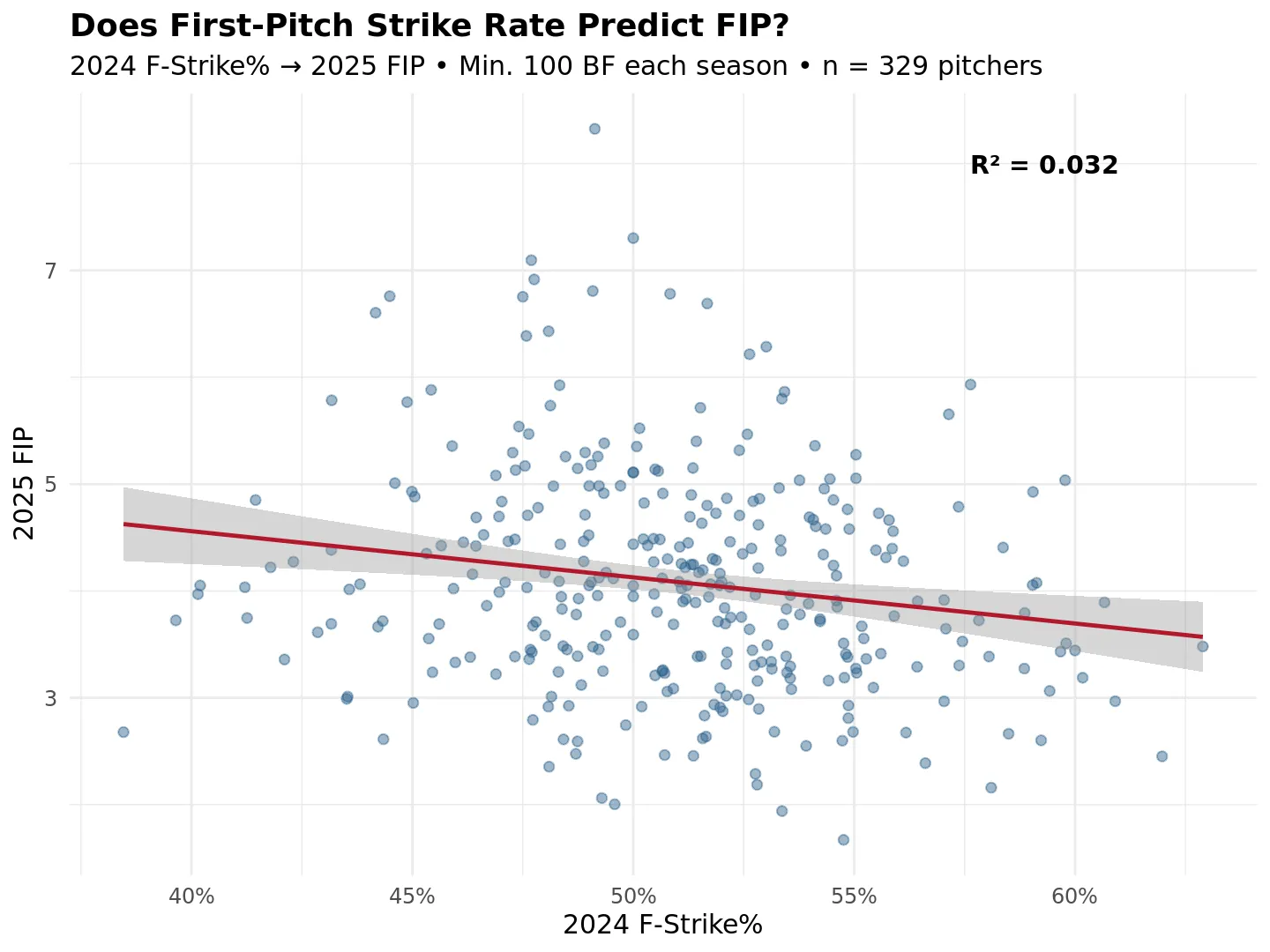

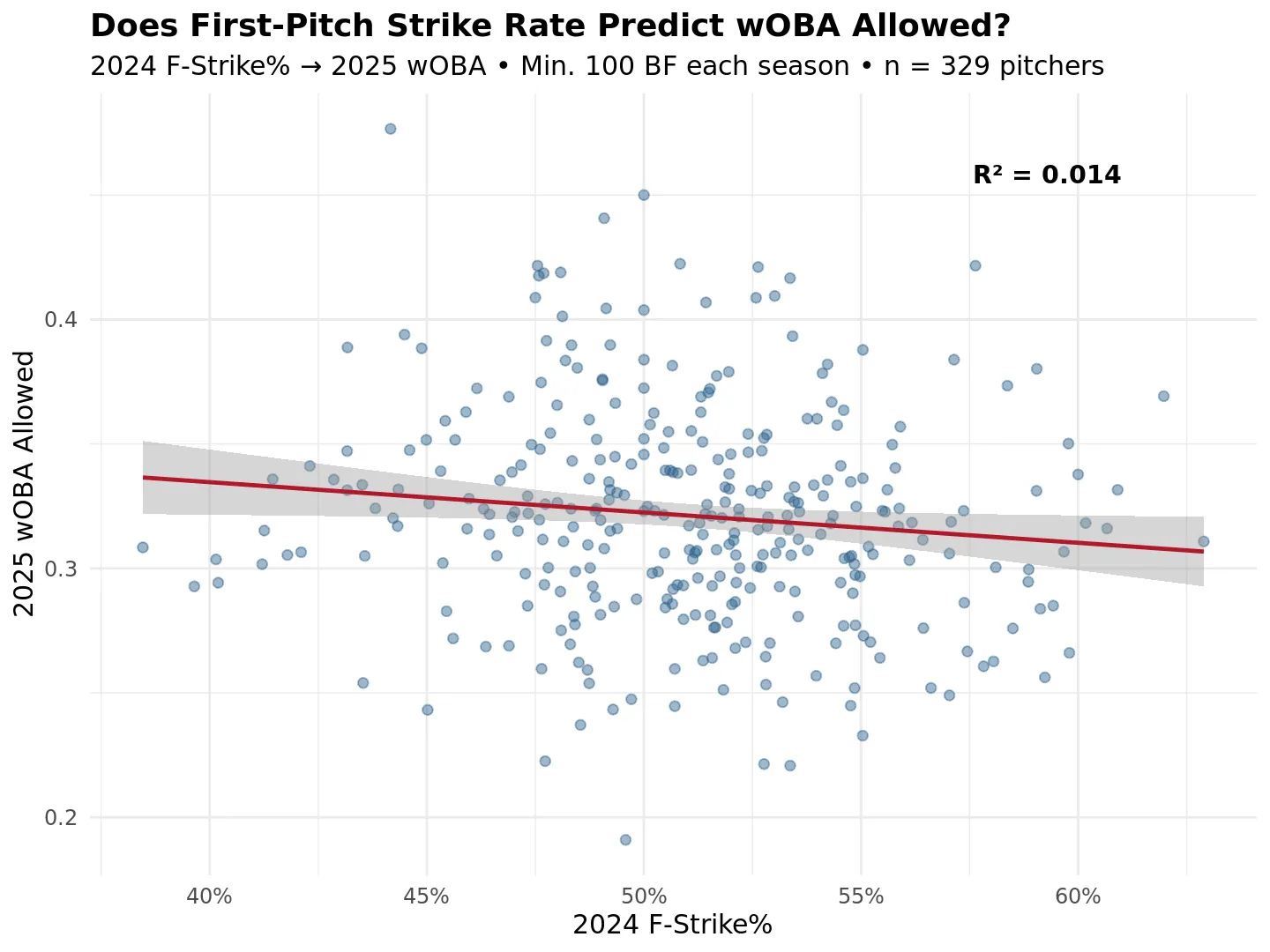

I pulled F-Strike% for pitchers who faced at least 100 batters in both 2024 and 2025, which left 329 pitchers. The predictor is their 2024 F-Strike%. The outcomes are their 2025 FIP and 2025 wOBA allowed.

FIP focuses on strikeouts, walks, and home runs (the outcomes pitchers control most directly, independent of defense). wOBA allowed is broader: it also reflects what happens on contact, so batted-ball results are included. I made one scatter plot per outcome in R, with 2024 F-Strike% on the x-axis, and fit a linear regression line to each. I’m still learning R, so take the presentation for what it is.
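For anyone who wants to reproduce the fit behind each plot, here is a minimal sketch of a one-predictor least-squares line and its R². It is written in Python rather than the R I actually used, `fit_line` is a made-up helper name, and the six data points are invented for illustration. They are not the 329-pitcher sample.

```python
from statistics import mean


def fit_line(xs, ys):
    """Ordinary least squares fit of y = intercept + slope * x, plus R^2."""
    x_bar, y_bar = mean(xs), mean(ys)
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    # R^2 = 1 - (residual sum of squares / total sum of squares)
    ss_res = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(xs, ys))
    ss_tot = sum((y - y_bar) ** 2 for y in ys)
    return slope, intercept, 1 - ss_res / ss_tot


# Made-up illustrative values, NOT the real data from this post.
f_strike_2024 = [0.56, 0.58, 0.60, 0.62, 0.64, 0.66]
fip_2025 = [4.40, 3.90, 4.60, 3.70, 4.20, 3.80]

slope, intercept, r_squared = fit_line(f_strike_2024, fip_2025)
print(f"slope={slope:.2f}, intercept={intercept:.2f}, R^2={r_squared:.3f}")
```

A negative slope here means higher F-Strike% goes with lower FIP, which is the "good" direction for a pitcher.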

Results

2024 F-Strike% vs. 2025 FIP: R² = 0.032.

2024 F-Strike% vs. 2025 wOBA allowed: R² = 0.014.

Is it predictive?

The direction is right in both cases: pitchers with higher F-Strike% in 2024 tended to post slightly better numbers (lower FIP, lower wOBA allowed) in 2025. Neither relationship is strong, though. Explaining 3.2% and 1.4% of the variance leaves a lot of room for other factors.
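Another way to read those numbers: with a single predictor, R² is just the squared Pearson correlation, so the R² values above convert directly to correlations. The short Python sketch below does the arithmetic. The negative sign is my inference from the direction described above (more first-pitch strikes, lower FIP and wOBA allowed), not something R² carries by itself.

```python
import math

# R^2 values reported in this post.
r_squared = {"2025 FIP": 0.032, "2025 wOBA allowed": 0.014}

# With one predictor, R^2 = r^2, so |r| = sqrt(R^2).
# Sign assumed negative: higher F-Strike% went with lower FIP and wOBA allowed.
correlations = {name: -math.sqrt(r2) for name, r2 in r_squared.items()}

for name, r in correlations.items():
    print(f"2024 F-Strike% vs. {name}: r = {r:.2f}")
```

That works out to correlations of roughly -0.18 and -0.12, which is weak by most standards.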

Whether that means F-Strike% is genuinely not that predictive, or whether a single season of data is just noisy at the pitcher level, is hard to say from this alone. F-Strike% itself may not be especially stable year to year, which would also limit how well it can predict anything. That would be worth checking separately.
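The stability check could be as simple as correlating each pitcher's 2024 F-Strike% with his own 2025 F-Strike%. Here is a rough Python sketch of that idea. I have not run this on the real sample, `pearson_r` is a made-up helper name, and the values are invented placeholders.

```python
from math import sqrt
from statistics import mean


def pearson_r(xs, ys):
    """Pearson correlation between two equal-length lists of numbers."""
    x_bar, y_bar = mean(xs), mean(ys)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    sxx = sum((x - x_bar) ** 2 for x in xs)
    syy = sum((y - y_bar) ** 2 for y in ys)
    return sxy / sqrt(sxx * syy)


# Made-up placeholder values, one entry per pitcher, same order in both lists.
f_strike_2024 = [0.56, 0.58, 0.60, 0.62, 0.64, 0.66]
f_strike_2025 = [0.59, 0.57, 0.62, 0.60, 0.63, 0.65]

stability = pearson_r(f_strike_2024, f_strike_2025)
print(f"year-to-year r = {stability:.2f}")
```

If that year-to-year correlation turned out to be low on the real data, it would suggest F-Strike% itself moves around too much from season to season to predict much of anything.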

Based on these numbers, F-Strike% is probably not a useful forward-looking stat on its own. The direction makes intuitive sense to me, but R² values of 0.032 and 0.014 are too low to lean on it as a predictor.